Imputations, and this method can be used to estimate the R 2 for an MI model. As mentionedĪbove, the MI estimate of a parameter is typically the mean value across the R 2 is (among other things) the squared correlation (denoted r) between the observed and expect values of theĭependent variable, in equation form: r = sqrt(R 2). Individual imputations (sometimes called the within imputation variance) and the degree to which theĬoefficient estimates vary across the imputations (the between imputation variance).įor more information on multiple imputation, see the “See also” section at the

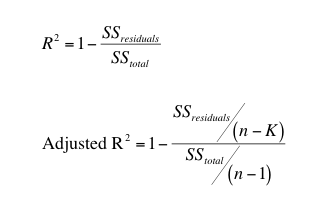

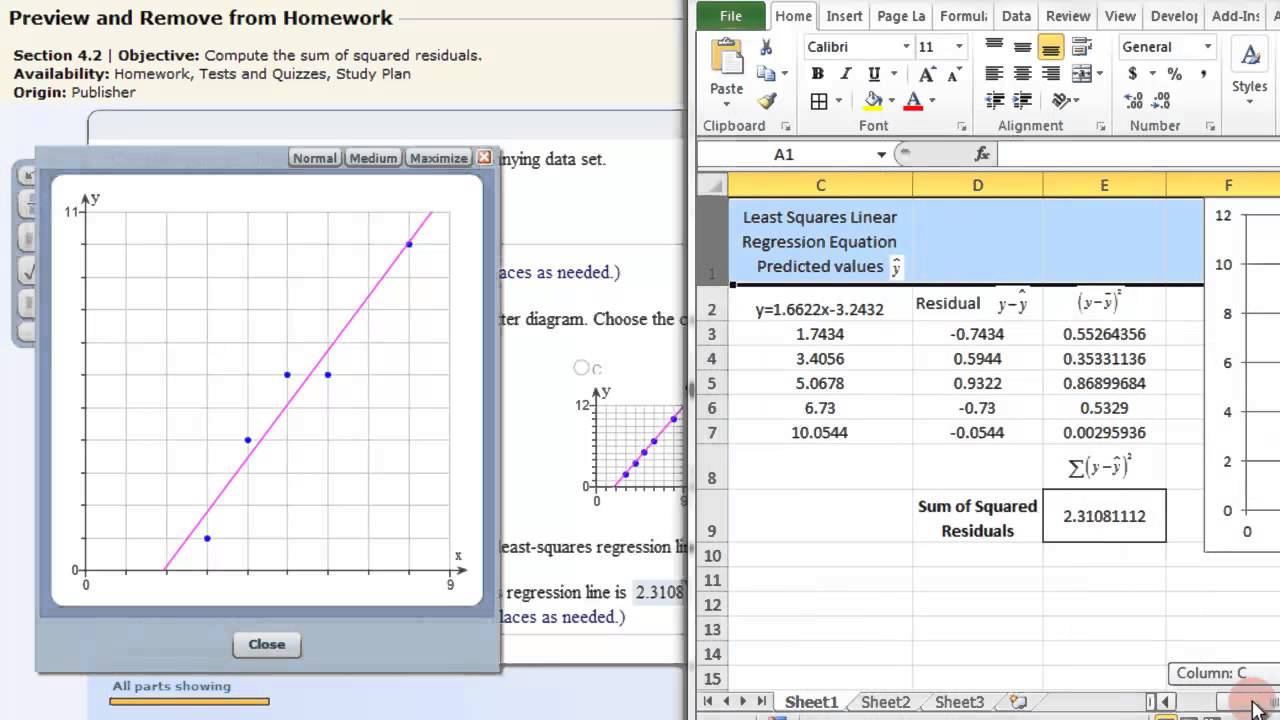

Parameter is calculated based on the standard error of the coefficient in the The MI estimate of the standard error of a a regression coefficient) is the average of the estimatedĬoefficients from the MI datasets. Without going into detail, the MI estimate ofĪ parameter (e.g. Intervals (based on Harel 2009), while mibeta does not. TheĬode to calculate the MI estimates of the R 2 and adjusted R 2Ĭan be used with earlier versions of Stata, as well as with Stata 11.Īdditionally, the code to calculate R 2 and adjusted R 2 “by Note that mibeta uses the mi estimateĬommand, which was introduced in Stata 11. Using the user-written command mibeta, as well as how to program theseĬalculations yourself in Stata. Below we show how to estimate the R 2 and adjusted R 2 Whatever your view, if you choose to use R-squared to inform your data analysis, it would be wise to double-check that it's telling you what you think it's telling you.R 2 and adjusted R 2 are often used to assess the fit of OLS regression ("I have never found a situation where it helped at all.") No doubt, some statisticians and Redditors might disagree. So is there any reason at all to use R-squared? Shalizi says no. And it should be noted that adjusted R-squared does nothing to address any of these issues. Shalizi gives even more reasons in his lecture notes. R-squared does not measure how one variable explains another.Īnd that's just what we covered in this article.R-squared does not allow you to compare models using transformed responses.R-squared does not measure predictive error.R-squared does not measure goodness of fit.This is another instance where plotting your data is strongly advised. Why not just use correlation instead of R-squared in this case? But then again correlation summarizes linear relationships, which may not be appropriate for the data. We then "apply" this function to a series of increasing \(\sigma\) values and plot the results. 
Notice the only parameter for sake of simplicity is sig (sigma). The way we do it here is to create a function that (1) generates data meeting the assumptions of simple linear regression (independent observations, normally distributed errors with constant variance), (2) fits a simple linear model to the data, and (3) reports the R-squared. Shalizi's statement is easy enough to demonstrate. It can be arbitrarily low when the model is completely correct. R-squared does not measure goodness of fit. Now let's take a look at a few of Shalizi's statements about R-squared and demonstrate them with simulations in R.ġ. Here's a quick example using simulated data: In R, we typically get R-squared by calling the summary function on a model object. Shalizi, however, disputes this logic with convincing arguments. Given this logic, we prefer our regression models to have a high R-squared. So an R-squared of 0.65 might mean that the model explains about 65% of the variation in our dependent variable. It ranges in value from 0 to 1 and is usually interpreted as summarizing the percent of variation in the response that the regression model explains. In case you forgot or didn't know, R-squared is a statistic that often accompanies regression output. It all begins in Section 3.2 of his Lecture 10 notes.

Shalizi provides free and open access to his class lecture materials, so we can see what exactly he was "ranting" about.

It turns out the student's stats professor was Cosma Shalizi of Carnegie Mellon University. On Thursday, October 15, 2015, a disbelieving student posted on Reddit: My stats professor just went on a rant about how R-squared values are essentially useless, is there any truth to this? It attracted a fair amount of attention, at least compared to other posts about statistics on Reddit.
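The demonstration of Shalizi's first statement (generate data that meet the simple linear regression assumptions, fit the model, report R-squared, then repeat for increasing sigma) can be sketched roughly as follows. The article's own demonstrations are in R; this is a Python sketch using only the standard library. The function name `r2_for_sigma`, the true intercept and slope (2 and 1.2), the sample size, the range of x, and the seed are all assumptions chosen for illustration, not values from the article.

```python
import random


def r2_for_sigma(sig, n=500, seed=1):
    """Simulate y = 2 + 1.2*x + e with e ~ N(0, sig), fit OLS, return R-squared.

    The coefficients, n, and seed are illustrative assumptions.
    """
    rng = random.Random(seed)
    x = [1 + 9 * i / (n - 1) for i in range(n)]  # evenly spaced on [1, 10]
    y = [2 + 1.2 * xi + rng.gauss(0, sig) for xi in x]

    # Closed-form least-squares fit of a simple linear model.
    mx = sum(x) / n
    my = sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx

    # R-squared = 1 - (residual sum of squares / total sum of squares).
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1 - ss_res / ss_tot


# "Apply" the function to a series of increasing sigma values.
sigmas = [0.5, 1, 2, 4, 8, 16]
results = [(s, r2_for_sigma(s)) for s in sigmas]
for s, r2 in results:
    print(f"sigma = {s:>4}: R-squared = {r2:.3f}")
```

In every run the fitted model has exactly the form that generated the data, so any drop in R-squared as sigma grows reflects the noise level rather than a misspecified model.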

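The multiple-imputation pooling described above (average the estimates across imputations, and build the standard error from the within-imputation and between-imputation variance) can be sketched as follows. This is a Python sketch rather than Stata, and it assumes the standard combining rules usually attributed to Rubin, in which the total variance is the within variance plus (1 + 1/m) times the between variance. The function name `pool_mi` and every number below are hypothetical, made up purely for illustration; the sketch does not reproduce mibeta or the confidence-interval calculation based on Harel (2009).

```python
from math import sqrt
from statistics import mean, variance


def pool_mi(estimates, std_errors):
    """Pool one parameter across m imputed datasets (assumed: Rubin's rules)."""
    m = len(estimates)
    pooled = mean(estimates)                      # MI point estimate
    within = mean([se ** 2 for se in std_errors])  # within-imputation variance
    between = variance(estimates)                 # between-imputation variance (m - 1 denominator)
    total = within + (1 + 1 / m) * between
    return pooled, sqrt(total)


# Hypothetical results from m = 5 imputed datasets.
coefs = [0.52, 0.48, 0.55, 0.50, 0.45]
ses = [0.10, 0.11, 0.09, 0.10, 0.10]
r2s = [0.31, 0.29, 0.33, 0.30, 0.27]

pooled_coef, pooled_se = pool_mi(coefs, ses)
pooled_r2 = mean(r2s)       # simple mean of R-squared across imputations
pooled_r = sqrt(pooled_r2)  # r = sqrt(R^2)

print(f"coefficient = {pooled_coef:.3f}, standard error = {pooled_se:.3f}")
print(f"R-squared = {pooled_r2:.3f}, r = {pooled_r:.3f}")
```

The pooled standard error is larger than a plain average of the per-imputation standard errors would suggest, because the between-imputation term adds the uncertainty due to the missing data.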